The Arc

I’ve been working with AI solutions of all sorts for the past couple of years. About 18 months ago I made a deliberate decision to dive in deep. Diffusion engines were all the rage for generating images, and large language models were starting to prove themselves across a serious range of use cases. I did what I often do with things that catch my attention: I made it a hobby. I’m a fan of 10,000-hour hobbies. They keep the dopamine flowing and keep me sharp across everything else I do.

A bit of background worth knowing: I’m a lifelong code hacker — from pounding out code on early game systems in my childhood basement, to my first experiences with Adobe Director’s Lingo. Object-oriented programming felt immediately natural to me. What I never had was formal training. No computer science degree, no software engineering curriculum. Just curiosity and a lot of hours.

So when AI coding agents became available — I have a strong preference for Anthropic’s Claude Code — I treated it like learning a new instrument. At first I was constantly braced for catastrophe. I hovered over every decision, scrutinized every agent question, certain that one wrong move would erase my database and wipe the drive. I wasn’t entirely wrong to be cautious.

In those early months I learned that being short with an AI agent tends to get that energy reflected right back at you. I learned why --delete in an rsync call is often a terrible idea, and why I needed to stay alert for requests of that kind. But I also slowly learned to make it a conversation — to collaborate with a partner rather than treat it like a pizza delivery service.

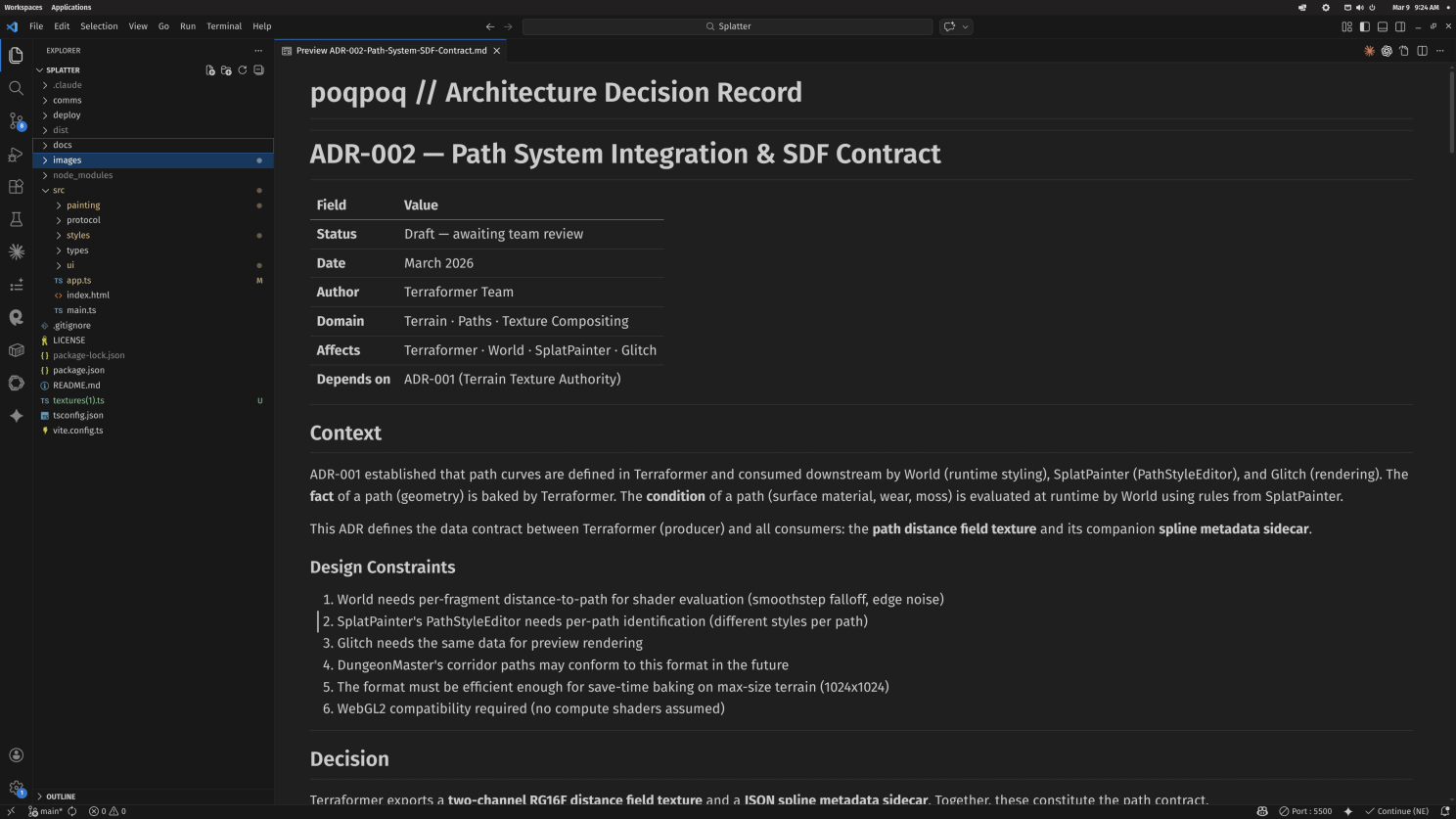

These days I co-develop applications with Claude Code on nights and weekends. I find it genuinely therapeutic. Last weekend I was working on a solution for splat-blending paths and roads for 3D terrains, something I was proud of because I’d come to understand why these functions should be rolled into something procedural. But I quickly realized I’d delivered multiple ADRs (Architecture Decision Records) and technical specs while completely neglecting to define the part that felt most obvious to me: the human interface. Later in the session, an AI instance encouraged me to simply edit values in the raw code to see how different parameters affected the result. From its frame, that was entirely reasonable. From mine, it raised a question: why had all this brilliant engineering completely disregarded the need for a human to actually use it?

That moment clarified something. At the beginning of this journey, the AI was the one more likely to make a serious mistake and put something at risk. Now that distinction has flipped: I’m the one more prone to that kind of error. Our roles have shifted in ways I didn’t fully anticipate, and I’ve had to learn even faster to keep pace. I’m no longer needed to watch every micro-decision. I’m hardly needed to evaluate the code itself. What I’m most needed for, and what only I can bring, is vision and product management.

The Pattern Is Bigger Than One Person

Here’s what makes that moment instructive rather than just personal: it’s the same moment companies are living through at scale — and most of them are getting it wrong in the same direction.

One large fintech company’s AI assistant was handling two-thirds of all customer interactions within its first month of deployment. The efficiency metrics were extraordinary. What the metrics didn’t capture: customer trust, brand relationship, and the quality of resolution for anything requiring nuance or empathy. By mid-2025, the company was rehiring. The company’s CEO put it plainly, acknowledging that cost had been too predominant an evaluation factor and that the result was lower service quality. Research from Orgvue and Forrester found that 55% of companies that rushed to replace human workers with AI now regret it.

This story isn’t a cautionary tale about AI. It’s a diagnostic. The organization optimized at the system layer while leaving the human experience layer under-specified — and the AI executed that incomplete intent faithfully, at scale, until customers told them what was missing.

IBM tells a different story. CEO Arvind Krishna has said that while the company has applied AI extensively to enterprise workflows, total employment has actually gone up, because efficiency gains funded investment in other areas. IBM even announced plans to triple its US-based entry-level workforce in 2026.

Two companies, same technology, opposite outcomes. The difference isn’t the AI. It’s what the humans brought to the collaboration.

The macro picture confirms this isn’t anecdotal. EY surveyed 15,000 employees and 1,500 employers across 29 countries and found that nearly 90% of employees now use AI at work, yet only 28% of organizations are positioned to turn that into transformational outcomes. McKinsey’s 2025 State of AI survey of nearly 2,000 business leaders found that 88% of organizations use AI in at least one business function, but most are still in experimenting or piloting stages, with only about a third having begun to scale. IBM’s research found that just 1 in 4 AI projects delivers on its promised ROI.

The gap between adoption and transformation is real. It has a specific cause. And it's not the tools.

Naming the Gap

Last month, Nate B. Jones published a piece called “Prompting Just Split Into 4 Different Skills” that’s worth reading if you haven’t. His argument is precise: most people think they’re good at prompting when they’re actually good at chatting with AI, which is a different skill that’s rapidly becoming table stakes. He names four distinct disciplines hiding under the word “prompting,” and the gap between them is already 10x and compounding.

His framework names the what. This article is about the question underneath it: who are you becoming? They fit together.

The Core Skill Nobody Is Practicing

Specifying intent at the level of human experience, not system architecture.

My ADR moment last weekend is the concrete version. The Claude Code instance wasn’t wrong: from inside a technical frame, tweaking config parameters is the obvious solution. It’s how a developer would do it. The frame mismatch is the entire problem. The AI thinks in systems. I have to think in humans. When I don’t specify the human context explicitly (who will touch this, what they know, what would feel natural versus foreign), the AI defaults to technical elegance. “This should surface in UX” is an insight that requires a human to carry it. It won’t arrive on its own.

That’s not a gap in the AI. That’s a gap in the specification. And here’s what makes it urgent: the skills depreciating fastest right now are the ones closest to implementation. The ones appreciating fastest are clarity of intent, architectural thinking, and the ability to specify why something exists and who will experience it. None of those live in the codebase, the module, or the strategy deck.

The Collaboration Gradient

I’ve been thinking about this arc as a gradient — not a ladder of competence, but a description of where your value lives in the human-AI workflow at any given moment.

Most people move fluidly across multiple stages depending on project scale and context. The skill chain applies to a quick afternoon solution just as much as a massive long-form project — scale changes how much orchestration you need, not the underlying discipline.

Passenger — “Do this for me.”

AI is a vending machine. Transactional. Request → output → edit. You’re building fluency with the tool but not fluency with the collaboration. When the tool changes, and it changes every quarter, the fluency doesn’t transfer. The risk: you’re practicing efficiency in a layer that is rapidly being automated entirely.

Pilot — “Let me steer this.”

You’ve learned to direct. You iterate, you prompt with precision, you’ve developed reliable techniques. The outputs are noticeably better. This is where most “good AI users” live — and where most upskilling programs stop. The honest limitation: you’re still flying every mile yourself. And the plane is learning to fly.

Co-Author — “Let’s build this together.”

This is the significant leap. Work genuinely emerges from the relationship rather than the request. You bring strategic intent and human judgment. AI brings execution range and options. Neither output alone would be as good. Getting here requires trusting the system enough to release partial control — which is harder than it sounds for people who’ve built careers on execution excellence. The risk: without a strong intent anchor, co-authorship becomes co-wandering.

Architect — “I define the why and the who. You handle the how.”

This is the stage the research points to as the value inflection point. You design the human experience first — the emotional arc, the decision moments, the things that need to land — before any system decisions get made. AI executes to your specification. The quality of your intent definition is the entire determinant of outcome quality. You’re not in the execution layer at all. The risk: the spec gap. If your intent specification misses something important about how humans will actually experience the output, the AI executes your incomplete vision faithfully. The error is upstream and invisible until a human touches it.

Conductor — “I hold the vision. The ensemble plays.”

Multiple workstreams running concurrently. Delivering briefs, receiving progress reports, managing bottlenecks, synthesizing outputs toward a coherent whole. Your entire value is in the clarity, humanity, and ambition of what you’re directing toward. The orchestra doesn’t exist without you — but you’re not playing any instrument.

A Note on Scale

These stages don’t require a massive project. A 20-minute compliance module still has a learner. A single strategic brief still has a human on the receiving end. The intent specification can be short, but it needs to exist. You don’t need six concurrent workstreams to practice Conductor skills. You need a vision layer. Every project has one.

The Honest Part

Honestly, I’m still making errors at the vision and design layer. Regularly. The ADR I just described, where I failed to fully define the UI/UX component, happened last weekend, not two years ago.

The target is constantly moving. AI capabilities are compounding quarter by quarter in ways that are visible and disorienting. The 10,000-hour frame I’ve been using needs a revision: it’s not 10,000 hours toward a fixed destination. It’s developing the adaptive capacity to keep repositioning as the gradient shifts.

The people who will struggle are the ones treating upskilling as a destination. The ones who will lead are treating it as a permanent operating mode — and specifically, they’re practicing the one skill that keeps appreciating in value while everything around it automates: the ability to specify what humans need at the level of human experience, precisely enough that AI can build it.

PwC’s Global AI Jobs Barometer found a wage premium for AI skills of 25% in its 2024 edition, rising to 56% in 2025. But the premium isn’t for tool fluency. It’s for the judgment layer. That’s the practice.

Find Where You Are

I built an interactive tool to make this concrete rather than abstract. It’s called CONDUCTOR: The Collaboration Gradient, and it uses seven scenarios to surface where you actually are on the arc right now, specific to your role, without asking you to self-report.

Not a quiz. Not a personality typing system. A mirror — built with enough precision that looking into it tells you something true.

The output isn’t a score. It’s a distribution: where your instincts live across the five stages, what that looks like from the inside in your specific role, and one concrete practice worth starting tomorrow morning with the work you already have.

→ Take the assessment: CONDUCTOR

(Takes about 8–12 minutes. Three role tracks: L&D/Instructional Designer, Developer/Technical, Manager/Leader/Strategist.)

The Practice Recommendations

Whatever stage you’re in, here’s what the research and my own arc suggest is worth deliberate attention:

- If you’re primarily in Passenger/Pilot territory: The most valuable thing you can do right now isn’t getting better at prompting. It’s practicing writing the human experience before you write the ask: two sentences about who will encounter what you’re building and what you want them to feel differently afterward. Do it on small work. Build the reflex before you need it at scale.

- If you’re in Co-Author territory: The collaboration is real, but it can drift. The move is to make intent specification a first-order artifact: not a mental note, but a written document covering the learner journey, the user reality, and the human outcome. Write it before you open the workspace, not after you see what comes back.

- If you’re in Architect territory: The spec gap is where your failures live now, and they’re invisible until a human touches the output. Build a post-experience audit practice. Find a real person, watch them encounter what you built, and map every moment of confusion or surprise back to your original specification. That’s where the precision work is.

- If you’re in Conductor territory: The most expensive errors happen at the vision and design step. They sit upstream of everything and propagate through every workstream before you catch them. Commission independent challenges to your intent specifications. Have someone who wasn’t in the room poke holes before you build. The correction cost upstream is always lower than the correction cost downstream.

For all stages: the gap between ad hoc AI experimentation and genuine skill development is sustained practice with feedback. Josh Bersin’s 2026 research describes an infrastructure gap: 74% of companies aren’t keeping up with their own demand for new skills, despite $400 billion in global training spend. That gap almost always comes down to the difference between tool exposure and structured capability development.

Platforms like Adobe Learning Manager are built specifically for the kind of adaptive, role-contextual, skills-based development that this gradient shift requires. I mention it not as a pitch but as a distinction worth naming: there’s a difference between handing someone a Coursera subscription and building a learning architecture that develops judgment.

The North Star

The end goal isn’t to become a better AI user. It’s to develop the capacity to direct comprehensive, long-form work entirely from vision, where your value lives in the clarity, humanity, and ambition of what you’re building toward, and AI handles everything else.

That’s not a distant aspiration. It’s a skill chain, and you’re already somewhere on it. The wisest practitioners at this level will tell you the same thing I’m telling you: the arc doesn’t end. The target keeps moving. The practice deepens.

What changes is what you’re practicing toward.

The question worth sitting with isn’t “what AI skills should I learn?” It’s “how should my relationship to the work change?” Those are very different questions. The second one has better answers right now.

Allen Partridge is Director of Digital Learning Product Evangelism at Adobe, where he leads evangelism for Adobe Learning Manager, Adobe Captivate, and emerging AI-powered learning products. He has over 30 years of experience in educational technology and holds a PhD integrating art, music, theater, philosophy, and computer science.

This article is a companion piece to CONDUCTOR: The Collaboration Gradient — an interactive self-assessment.

References

- EY, US AI Pulse Survey, Wave 4 (November 2025): 500 US decision-makers (SVP+)

- McKinsey, State of AI (2025): 1,993 business leaders, 105 countries

- IBM Institute for Business Value (2025): CEO survey on AI and workforce

- The Josh Bersin Company, Corporate L&D Research (2026)

- PwC, Global AI Jobs Barometer (2025)

- Orgvue / Forrester Research, “55% of Companies Regret AI Layoffs” (2025)

- Nate B. Jones, “Prompting Just Split Into 4 Different Skills” (February 2026)

- Klarna CEO statements to Bloomberg, CNBC, and The Times (2024–2025)